Netstalking

Netstalking is the practice of browsing the depths and fringes of the web in search of secret or hard-to-access information, either because it is not indexed by search engines or because it was never meant to be accessed, and then analyzing and archiving what is found. The information sought may have no practical value yet be aesthetically pleasing, or it may contain shocking secrets. Netstalking is often done without a specific goal, in the hope of finding something interesting.

History and origins

Many would point to the Russian anonymous imageboard Dvach in 2009, the year it was shut down, as the beginning of the netstalking movement, but the movement's roots can be traced further back, to 2008 and its spiritual predecessor, Project 9-eyes.

Project 9-eyes is a textbook example of deli-search (deliberate search) netstalking, and it was well suited to kickstarting the movement. Its creator, Jon Rafman, spent 8 to 12 hours a day working on it, and its premise was simple: "randomly browsing the Google Street View images in search of interesting, bizarre or shocking images that may have been accidentally caught by the Google Street View car camera". Because Google Street View was so widely accessible, anyone even remotely interested could dip their toes into the world of netstalking. Once an image was found, it was screenshotted and later uploaded to a website for all to see.[1]

Project 9-eyes is still active to this day.

Sadly, the movement did not catch on in the English-speaking world, but around 2009 it caught the attention of anonymous users on Dvach. There it was spearheaded by the ISKOPAZI team, who mainly found IP cameras around the world, a good example of net-random netstalking. Even these modest achievements were enough to spark rumors and gossip and to ignite interest in netstalking. When Dvach was eventually shut down, the movement spread with its users to other platforms, ensuring that it would live on.

Riding this wave of interest, the book "Тихий дом" (Silent House) was written. It spread interest in netstalking even further by describing a map of network levels, with mysteries and unimaginable secrets dwelling at the bottom, just waiting to be discovered by a lucky netstalker: almost a call to adventure and a challenge to netstalkers. With this new wave, so-called organizations began to appear. The most famous was "Amnesia", created in 2012, which gave rise to many famous and scandalous stories on 4stor, a website in the vein of Dvach.

Sadly, Amnesia later revealed in an open letter that all the information they claimed to have found while netstalking was fake, and that most of what they had told the public was done for attention. A similar thing happened with the book "Тихий дом" (Silent House): tired of maintaining and developing the story, its author announced that everything was fiction. Even so, the rumors never stopped and still occasionally circulate on the forums.

A movement cannot be sustained by fake rumors and publicity stunts alone, but netstalkers did find many genuine hidden treasures of the internet, such as unique sites, net art and ARGs (alternate reality games). Still, the wave could not sustain itself indefinitely, and around 2015 interest started dying down. Some attribute this to the exhaustion of the easier-to-find hidden gems: finding anything of interest now required more technical skill, which raised the barrier to entry. Since 2016 the community has been pushing to become more accessible to newcomers, creating group chats (the main one, "Collection Point", is still active) and holding numerous conferences. This made the community more approachable and brought an influx of new blood into the movement.

Nowadays the netstalking movement focuses on network archaeology and little-known networks, the art of working with search engines, and the possibilities of machine analysis of large bodies of information.

Morality

Although the movement lacks a leader or an official organization, an unspoken ethical code has developed over the years: for example, ruining discoveries is forbidden, owners must not be "hurt" in any way, and other common-sense rules exist to preserve findings for the future. Nobody can really enforce these rules, and the only penalty is a ruined reputation. How much of a threat that is remains questionable, since almost anyone can simply create a new account and leave the ruined reputation behind.

The line between a skilled netstalker and a skilled hacker may come down to their goal in obtaining the data they are after, so it is not unheard of for netstalkers to sell the data they recover, although this is frowned upon in the community. Safety is another factor: a netstalker may stumble upon information that should stay secret, for example something related to the mob, and selling or publishing it could put their safety at risk. This is another reason behind some of the community's unspoken rules.

Overall, if the community had to be assigned a hat color, it would be a white-hat community, since its members tend to report unsecured sensitive data and server back doors. As in every community there are outliers, and the community as a whole should not be judged by them.

Open Source Intelligence

The main idea of Open Source Intelligence (OSINT) is collecting publicly available information that can later be used for either malicious or testing purposes. In this context, "open source" does not mean open source in the software sense; it means that the collected information is publicly available to everyone. Everyday information that people post or share on social media can contain very valuable details that they do not even realize are there. Although the technique is mostly used by organizations to test their own systems, hackers take advantage of it too.[2]

History

OSINT dates back to military and intelligence services around WWI, before these technologies were in everyday use. Back then, a special task force was set up to gather information about assassination attempts and other crucial political matters. At the time, analysts relied on newspapers, journals, press clippings and radio broadcast reports, looking for photos and details that might give away information about the enemy, because, as William Donovan stated, "Even a regimented press will again and again betray their nation’s interests to a painstaking observer."

The shift from gathering information by intercepting an opponent's mail or tapping phones to using publicly available databases could be seen as the beginning of OSINT as we know it today. As technologies evolved and became more widely used, people got into the habit of posting everything they were doing online. Although part of society started to understand what this could mean, the first big realization that social media could be used to collect useful information came with Iran's 2009 "Green Revolution". Protesters used social media platforms to express themselves, and everyone across the world could see what was happening. It was the first time the internet was full of political content and insights: 60% of all blog links on Twitter were about Iran during the first week of the protests. After this, it was only a matter of time before everything ended up on the internet. A good example of how powerful OSINT has become is the report of the US destroying an Islamic State bomb factory only 23 hours after a member of the terrorist group posted a selfie in which the rooftop of a building could be seen.[3]

Usage

OSINT is very important for monitoring the information posted on the internet, and it involves three main tasks. Many tools have been developed for this purpose, and most of them fulfill all or some of the same three functions:

- Discover public-facing assets: record information that is publicly available and poses a threat to the organization if inspected more closely.

- Discover relevant information outside the organization: find relevant information outside the organization's network (social media posts, domains and locations).

- Collate discovered information into an actionable form: gather all the data and make it presentable or easier to work with.

Moreover, OSINT techniques can be divided into two categories, active and passive. Active techniques involve direct contact with the target; they give more reliable results but carry a high risk of detection. Passive techniques rely on third-party services; they may include false positives and noise but carry a low risk of detection. Widely used OSINT functions include:

- Monitoring personal and corporate blogs

- Review content that is posted on social media

- Access old cached data from Google

- Identify mobile phone numbers and email addresses

- Collect employee full names and personal information

- Search for photographs and videos on sharing sites like Google Photos [4]

However, a really important step in OSINT is filtering out the information that can actually be used, because the full body of collected data may be too large to prepare for analysis and presentation. This leads to the last step of an OSINT investigation: translating the gathered data into a human-readable form.
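The filtering and collating steps can be sketched in a few lines. This is a minimal illustration, not any particular tool's pipeline: the `(kind, value, source)` record format and the `collate`/`to_report` helpers are hypothetical. Records of unwanted kinds are dropped, duplicate values are merged while remembering every source that reported them, and the result is rendered as plain text.

```python
from collections import defaultdict


def collate(records, wanted_kinds):
    """Filter, deduplicate and group findings.

    records: iterable of (kind, value, source) tuples (a hypothetical format).
    Values are normalized to lowercase, which suits e-mails and domains but
    would be wrong for case-sensitive data.
    """
    grouped = defaultdict(lambda: defaultdict(set))
    for kind, value, source in records:
        if kind not in wanted_kinds:
            continue  # filtering: drop data outside the investigation's scope
        grouped[kind][value.strip().lower()].add(source)
    # Convert to plain dicts with sorted source lists for stable output.
    return {
        kind: {value: sorted(sources) for value, sources in values.items()}
        for kind, values in grouped.items()
    }


def to_report(grouped):
    """Render collated findings as a human-readable plain-text summary."""
    lines = []
    for kind in sorted(grouped):
        lines.append(f"{kind}:")
        for value in sorted(grouped[kind]):
            lines.append(f"  - {value} (seen in: {', '.join(grouped[kind][value])})")
    return "\n".join(lines)


records = [
    ("email", "Info@Example.com", "site"),
    ("email", "info@example.com", "breach"),
    ("domain", "example.com", "dns"),
    ("ad", "banner-123", "site"),
]
print(to_report(collate(records, {"email", "domain"})))
# domain:
#   - example.com (seen in: dns)
# email:
#   - info@example.com (seen in: breach, site)
```

Keeping the source list per value matters for the legal considerations discussed later, where the origin of each piece of data can decide whether it is usable.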

Risks

Although the information used is publicly available, there are risks, which are usually ignored. When using direct contact, an investigator may be detected, which could mean losing access to the target's information, since the target may try to hide it by shutting down social media profiles or deleting data. The investigator could also become a victim or endanger their own organization, as the target would probably take an interest in their "attacker" and do their own research.[5]

Laws

As stated previously, the data being used is public, so performing an OSINT investigation should technically be legal, but this is where civil rights and liberties become important. One of the main things investigators need to keep in mind is society's view of how its data is used, so integrity and high ethical standards should be taken into serious consideration.

Whatever the reason for an investigation, there should always be a set of tactics, techniques and procedures that ensure compliance with the law and produce results that can be used later on. However, the laws that need to be taken into consideration change depending on who uses the technique.

In law enforcement, the main goal is not to jeopardize the possibility of using the data in an investigation, which means that if a warrant seems necessary, it is best to get one. For corporate security teams this is more complicated. If the organization intends to pursue legal action later, everything needs to be collected legally. In this case, the main goal is to prevent the gathered data from being discredited in a legal case as wrongfully obtained. When dealing with so much data, it can become difficult to determine where each piece came from. For example, even information gathered from a public Facebook post might not be usable in a legal case, because Facebook's Terms of Service conflict with one of the main principles of OSINT: "The main qualifiers to open-source information are that it does not require any type of clandestine collection techniques to obtain it and that it must be obtained through means that entirely meet the copyright and commercial requirements of the vendors where applicable." (Mark M. Lowenthal)

This means that information collected through an OSINT investigation should be thoroughly filtered not only by importance but also by source in order to be usable in legal cases.[6]

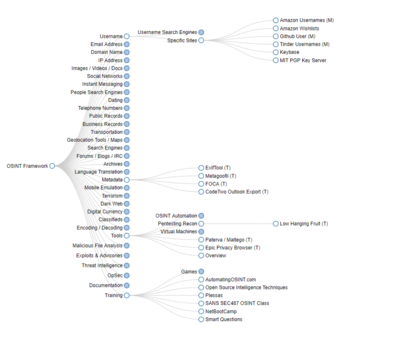

OSINT Framework

Netstalking Tools

Over the years, many tools have been developed that the netstalking community adopted as its mainstays. These range from open-source fan projects to professional OSINT tools with subscription models, all of which fit netstalking use cases.

Most popular tools

- Metagoofil

- SpiderFoot

- theHarvester

- Recon-ng

- Searchcode

- Babel X

- Maltego

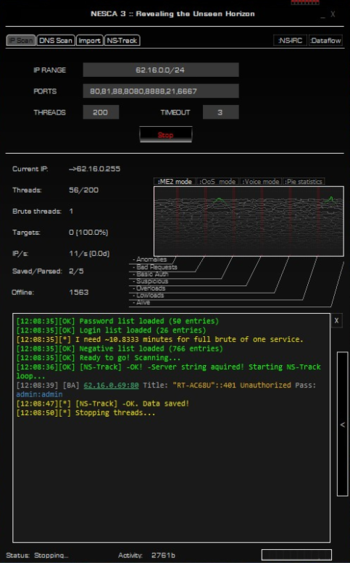

Nesca

Nesca is a network scanning tool used to scan IP addresses and the ports associated with them, as well as to do minimal bruteforcing on the protocols it finds. It was created by the group "Iskopazi" (Russian "Ископази"). The group itself was founded around 2010, and sources claim that the key to the original version of Nesca was posted on the /b/ board of the imageboard d3w.org, probably the equivalent of 4chan's "random" board. The link is now dead, and unlike 4chan, no archives of the site can be found.[9] Unfortunately, this means there is no clear date for Nesca's publication, but the repository with the earliest commits dates to 8 August 2012.[10]

One detrimental feature in the past was that Nesca sent all the scanned ports and usage data to d3w.org,[9] but since the source code is now widely available, this feature appears to be optional. Nesca was also suspected of being a possible trojan vector,[9] but according to the most recent GitHub readme a partial audit has been done, so it can be considered about as safe as any application partially audited by a single independent developer.[11] The fact that such suspicions circulated suggests that the source code was not available at first, so the date given above, 08/08/2012, is probably later than the original release. In any case, hackers need not worry much about an application's security when its source code is right in front of them.

Features

By the standards of its time, Nesca has a very "hacker-ish" design, and it comes with several features, most of which have already been mentioned: scanning IP address and port combinations and bruteforcing them. It can also scan domain name and port combinations and bruteforce those. This is essentially the same thing, but it can save time on lookups, and many sites host their API or other endpoints on the same IP as their website because of old-school monolithic site design and general hosting costs. It should also be noted that when the earliest versions of Nesca appeared, microservice architecture was not nearly as ubiquitous as it is today.

As already mentioned, Nesca does the bruteforcing on our behalf. This functionality can be adjusted in several ways: IP addresses can be read from a file or entered directly, the number of threads used to bruteforce logins can be set, and we can supply our own login and password lists. The number of threads is self-evident, since it is right there on the interface. An IP address range is also easy to give: enter the start and end addresses of the range, or enter several IP address ranges separated by commas. As mentioned, a list can also be imported through Import->Import&Scan, which lets us choose a .txt file listing the IPs. The password lists cannot be edited from the application, but after some head scratching and inspection of the repository's code, it can safely be said that the files in the "pwd_lists" directory, such as ftplogin.txt and its complement ftppass.txt, can be edited to include relevant usernames and passwords.
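To make the range input concrete, here is a minimal Python sketch that expands comma-separated `start-end` ranges and single addresses into individual IPv4 addresses, and reads the same format from a .txt file, one entry per line. The exact syntax and the helper names `expand_ranges` and `read_targets` are assumptions for illustration, modelled on the description above rather than taken from Nesca's source code.

```python
import ipaddress


def expand_ranges(spec):
    """Expand 'start-end' ranges and single addresses, separated by commas, into IPv4 strings.

    The 'start-end' syntax is an assumption modelled on the description above,
    not taken from Nesca's source code.
    """
    addresses = []
    for part in spec.split(","):
        part = part.strip()
        if not part:
            continue
        if "-" in part:
            start_text, end_text = part.split("-", 1)
            start = ipaddress.IPv4Address(start_text.strip())
            end = ipaddress.IPv4Address(end_text.strip())
            if end < start:
                raise ValueError(f"range end precedes start: {part}")
            # Smallest set of CIDR blocks covering start..end inclusive.
            for block in ipaddress.summarize_address_range(start, end):
                addresses.extend(str(ip) for ip in block)
        else:
            addresses.append(str(ipaddress.IPv4Address(part)))
    return addresses


def read_targets(path):
    """Read a .txt target list (one address or range per line), as with Import&Scan."""
    with open(path, encoding="utf-8") as handle:
        lines = [line.strip() for line in handle]
    return expand_ranges(",".join(line for line in lines if line))


print(expand_ranges("10.0.0.1-10.0.0.3, 192.168.1.5"))
# ['10.0.0.1', '10.0.0.2', '10.0.0.3', '192.168.1.5']
```

Using `summarize_address_range` keeps the range arithmetic in the standard library instead of converting addresses to integers by hand.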

The interface also has several nifty features for analyzing discoveries. ME2 mode shows the frequency of several types of discovered addresses: Cameras, Basic Auth, Other, Overloads and Alive connections. This may seem a bit lackluster, but the QoS and Pie Statistics modes also show the number of SSH hosts. SSH is often secured with public keys, which cannot simply be bruteforced by a tool, so it makes sense that SSH is largely ignored and only cameras and FTP servers, which are understandably insecure, are treated as actual targets, as most of the search results for "как использовать Nesca" ("How to use Nesca" in Russian, since the tool is not very popular outside Post-Soviet countries) suggest.

Another interesting and probably more important feature is that Nesca generates a hefty HTML report file in the folder it is run from, styled like Nesca's own interface. This saves us from rescanning the whole IP address range every time we want to dig for information. The final use case, and probably the most common one given which segment of the Russian population hacks for fun with third-party tools, is leaving Nesca running overnight and coming back the following morning to collect the spoils.
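The statistics view and the saved HTML report can be illustrated with a short Python sketch. It is not Nesca's code: the `(address, category)` result records and the helper names are hypothetical, and only the category names come from the ME2 description above. It counts findings per category and writes them, HTML-escaped, into a minimal standalone report.

```python
import html
from collections import Counter

# Category names as shown in Nesca's ME2 view; the record layout is an assumption.
CATEGORIES = ["Cameras", "Basic Auth", "Other", "Overloads", "Alive"]


def category_counts(results):
    """Count discoveries per category, reporting zero for categories not seen.

    Categories outside CATEGORIES are ignored by this sketch.
    """
    counts = Counter(category for _, category in results)
    return {name: counts.get(name, 0) for name in CATEGORIES}


def html_report(results):
    """Build a minimal HTML report so earlier findings can be reviewed without rescanning."""
    rows = "\n".join(
        f"<tr><td>{html.escape(addr)}</td><td>{html.escape(cat)}</td></tr>"
        for addr, cat in results
    )
    summary = ", ".join(f"{k}: {v}" for k, v in category_counts(results).items())
    return (
        '<!DOCTYPE html>\n<html><head><meta charset="utf-8"><title>Scan report</title></head>\n'
        f"<body><p>{html.escape(summary)}</p>\n<table>\n{rows}\n</table></body></html>"
    )


results = [("10.0.0.1:80", "Cameras"), ("10.0.0.2:21", "Basic Auth"), ("10.0.0.3:80", "Cameras")]
print(category_counts(results))
# {'Cameras': 2, 'Basic Auth': 1, 'Other': 0, 'Overloads': 0, 'Alive': 0}
```

Writing the returned string to a file next to the scanner gives the "scan once, review later" workflow described above.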

What keeps Nesca from being more than a hacking-as-a-hobby tool is the lack of a CLI, which would allow it to be deployed to several devices to run more sophisticated and evenly distributed scans. It also lacks an updated UI and is badly incompatible with Linux: its window ignores the maximum height of the screen and refuses to be resized.

Nesca looks like a very old tool. Even though the audit was done two years ago and a Russian GitHub user decided to pick the tool up and "optimize" it (godspeed to him), the most recent significant contribution is already four years old. Beyond that, the tool's design and intent give its age away. As already pointed out, it really is a hacking-as-a-hobby tool: judging first by its functionality and then by the traffic it has generated on the Russian internet, its main use case is scanning for cameras or FTP servers and then "lurking" there to collect information.

For all intents and purposes, Nesca should not be taken seriously by professional security researchers, but for netstalking it is perfect. Netstalking is not about collecting mass data and analyzing it the Facebook way in order to sell it. Instead, netstalking means collecting data for the sake of collecting it, which vividly mirrors a favorite pastime of the Post-Soviet working class: looking out of the window and silently observing the world, only in a more digital way.

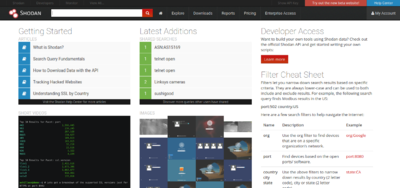

Shodan

Shodan is a search engine and OSINT tool that simplifies the search for and reconnaissance of potential targets. It can search by IP address, domain name, geolocation, server type (Apache, nginx), open port and a myriad of other properties. By design, Shodan is aimed at developers, data analysts and security researchers who want to find out which countries are becoming more connected, which regions have more vulnerabilities than others, what kinds of SQL databases are used in Nicaragua, and so on.

This section covers Shodan from a netstalker's perspective, so it discusses the website interface. Two more tools are available for automation and programmatic data fetching, the CLI and the REST API. These require a paid account and are limited: 100 searches per tool per month with the one-time membership purchase, and more for subscribers.

Shodan's big advantage over freely available tools is that it already has a substantial database of scanned IPs whose metadata banners it has collected and run through its analysis tools. Regular users such as ourselves can view the resulting data without even knowing, for example, about the vulnerabilities of the specific SSL version a particular server runs. The collected information can be searched by entering a query in the site's search engine, either by searching the data part of the banner or by applying filters to other parts of the metadata.

The following is an example of a banner:[12]

```json
{
  "data": "Moxa Nport Device\nStatus: Authentication disabled\nName: NP5232I_4728\nMAC: 00:90:e8:47:10:2d",
  "ip_str": "46.252.132.235",
  "port": 4800,
  "org": "Starhub Mobile",
  "location": {
    "country_code": "SG"
  }
}
```
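To show how the free-text `data` part differs from the structured metadata, the banner can be parsed with Python's standard `json` module. This is a sketch under one stated assumption: that `data` consists of `Key: value` lines, which holds for this Moxa banner but not for every service. The helper names `parse_data_field` and `summarize` are hypothetical.

```python
import json

# The example banner, reproduced as JSON text (line breaks in "data" written as \n).
BANNER_JSON = r"""{
  "data": "Moxa Nport Device\nStatus: Authentication disabled\nName: NP5232I_4728\nMAC: 00:90:e8:47:10:2d",
  "ip_str": "46.252.132.235",
  "port": 4800,
  "org": "Starhub Mobile",
  "location": {"country_code": "SG"}
}"""


def parse_data_field(banner):
    """Split the free-text "data" field into key/value pairs.

    Assumes "Key: value" lines; lines without a colon (like the device name) are skipped.
    """
    fields = {}
    for line in banner["data"].splitlines():
        # Split on the first colon only, so MAC addresses stay intact.
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def summarize(banner):
    """One-line summary built from the structured metadata fields."""
    country = banner.get("location", {}).get("country_code", "??")
    return f'{banner["ip_str"]}:{banner["port"]} ({banner.get("org", "unknown org")}, {country})'


banner = json.loads(BANNER_JSON)
print(summarize(banner))                   # 46.252.132.235:4800 (Starhub Mobile, SG)
print(parse_data_field(banner)["Status"])  # Authentication disabled
```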

To find banners such as this, we can input several kinds of queries:

org:"Starhub Mobile"

This finds all the devices owned by Starhub Mobile.

port:"4800"

This finds all the devices with port 4800 open.
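The filter syntax can be composed programmatically, and its effect imitated locally on banner dictionaries. The sketch below is illustrative only: `build_query`, `matches` and `FIELD_GETTERS` are hypothetical helpers, the local matching is an exact-comparison simplification of what Shodan does server-side, and only values containing spaces are quoted (the example above also quotes the port, which is optional for plain numbers).

```python
# Hypothetical helpers for illustration; they are not part of Shodan's own tooling.


def build_query(free_text="", **filters):
    """Compose a Shodan-style search string: optional free text plus name:value filters.

    Values containing spaces are quoted, as in org:"Starhub Mobile".
    """
    parts = [free_text] if free_text else []
    for name, value in filters.items():
        text = str(value)
        parts.append(f'{name}:"{text}"' if " " in text else f"{name}:{text}")
    return " ".join(parts)


# How a few filters map onto banner fields (a simplification of Shodan's server-side matching).
FIELD_GETTERS = {
    "org": lambda b: b.get("org"),
    "port": lambda b: b.get("port"),
    "country": lambda b: b.get("location", {}).get("country_code"),
}


def matches(banner, **filters):
    """Local, exact-match emulation of org/port/country filters against one banner dict."""
    for name, wanted in filters.items():
        getter = FIELD_GETTERS[name]  # raises KeyError for filters this sketch does not model
        if str(getter(banner)) != str(wanted):
            return False
    return True


query = build_query(org="Starhub Mobile", port=4800)
print(query)  # org:"Starhub Mobile" port:4800
# With the official `shodan` package and an API key, the same string could be passed
# to shodan.Shodan(API_KEY).search(query) instead of being matched locally.
```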

As is evident, this is a very flexible way to search for data, but it is also evident that knowing Shodan's query syntax is not enough. An experienced user should know which ports carry which protocols, which SSL versions have which vulnerabilities, what types of servers exist, and which operating systems to search for. For instance, FTP can run on many operating systems, including Solaris, which is not the first thing an inexperienced netstalker thinks of when setting out on a search. For such situations, the Shodan manual suggests looking at community queries.

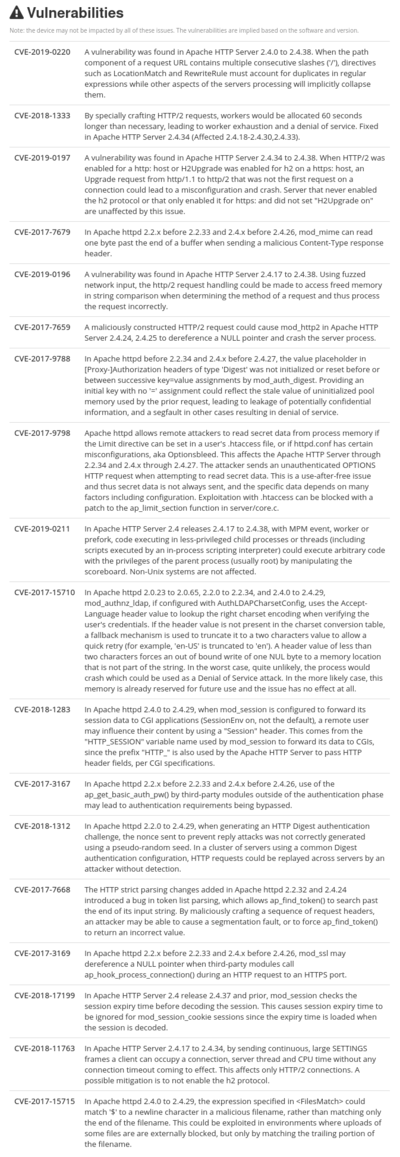

Finding the information

As already mentioned, Shodan also aggregates the known vulnerabilities of the software running at a specific IP address. One such list of vulnerabilities is shown in the figure. This is a very lucrative source of information for those with the knowledge to break into websites or gain unauthorized entry. From a netstalker's viewpoint, such a report is invaluable, since gaining entry and just looking around is exactly what a netstalker wants. After such a catch, the netstalker might store the gathered intel in their own database, share it with a close circle of like-minded individuals, or even collect more data and sell it on the dark web. This is unlikely to happen, however, because the listed vulnerabilities are not easy to exploit while also getting away with it: like any self-respecting website, Shodan logs all the activity on its premises and, in case of misconduct, can provide that information to a court. Experienced web users know that this holds for any OSINT platform run by "someone else".

In the end, Shodan can be considered a highly effective addition to any netstalker's arsenal and a great tool in general: it not only helps gather intel about targets and general trends on the web, but also helps explain the framework within which network searches can be made and what kind of data can be looked up with other, less centralized tools.

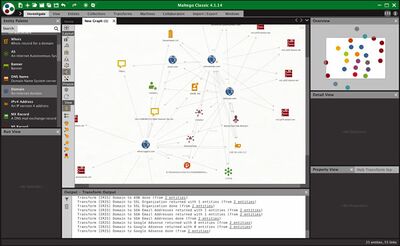

Maltego

Maltego is a visual intelligence and forensics tool developed by a company named Paterva in 2007.[14] Its purpose is to mine and gather information on a large scale in real time. The information is represented as a visual node-based graph, which makes patterns and multiple-order connections between pieces of information easy to identify. Maltego's advantage over other OSINT tools is how well it displays the gathered data.[15]

Maltego can get a lot of information about a variety of different targets.

Maltego extends its data reach through integrations with more than 30 data partners, including Pipl, CipherTrace, ServiceNow, Splunk Enterprise, Orbis, Intel 471 and more.[16]

Use

Maltego is very commonly used by enterprises, security researchers and private investigators.[17]

In cybersecurity operations and security operations centers, Maltego is used both for tier 2 incident response and for tier 3 threat intelligence analysis.[17]

Maltego is also used by international, federal and local law enforcement agencies to monitor and catch criminals around the world. For example, Germany's Federal Criminal Police Office (BKA) actively uses Maltego in its investigations.[17]

Setup

Regardless of what license you are planning to use, installing the tool is the same. It can be downloaded from their official site. The application is available for Windows, Linux and MacOS. As Maltego is based on Java, you are required to have Java version 8 or higher regardless of what operating system you are on.[18]

- Windows - the installer is available both separately or bundled with Java. After download, you can just run the installation EXE file which will take you through an installation wizard. If you downloaded the bundle version with Java, you will first get instructions on how to download the JRE.[18]

- Linux - if you are using Kali, Maltego comes preinstalled and can be found in the Information Gathering menu as maltegoce. If you are using another Linux distribution that doesn't include Maltego, download it from the official site. After downloading, extract the tarball to your preferred directory and run the Maltego executable directly from the bin folder.[18]

- MacOS - after downloading, just run the installer as any other file. You will be prompted with an installation window where you simply have to drag the application to the installation path.[18]

When launching the application for the first time, you will be prompted to select one of five licenses. After that, you have to accept the terms and licenses, followed by either logging in or creating an account. Maltego then automatically installs the Transforms available for your license. Transforms can be viewed and customized in the Transform Hub.

Licences

Maltego offers several versions of the software, which differ in the amount of information that can be gathered about a target.[19]

Maltego community edition (CE)(Free) - In Maltego CE (Community Edition) the community transforms will be installed and can be run to generate graphs, but the features are limited and the resulting graphs may not be used for commercial purposes. Works fine for standard penetration tests. Only 12 Entities returned per Transform.

Maltego CaseFile (Free) - In Maltego CaseFile graphs can only be created manually, no transforms may be run. More types of entities will be installed and the resulting graphs may be used for commercial purposes. Allows for offline investigations.

Maltego One (Paid) - Maltego One is the new unified solution to access and activate Maltego plans for Professionals and Enterprises.

Maltego XL (Paid) - Maltego eXtra Large is Paterva's premium solution to visualize large data sets and allows up to 1 000 000 Entities on a single graph.

Maltego Classic (Paid) - Maltego Classic is a commercial version of Maltego which allows users to visualize up to 10 000 Entities on a single graph.

Entities

In Maltego, small data points are referred to as Entities that are used to map and identify targets. Maltego provides an extremely wide range of Entities for tracking down people, malware, dates, cryptocurrency, domains, companies, and much more. With gigantic maps, keeping track of entities is easy as everything is visualized and every Entity group has its own icon.[20]

Transforms

Transforms are small pieces of code that automatically fetch data from different sources and return the results as visual Entities in the desktop client as a graph. Transforms are the central elements of Maltego which enable its users to unleash the full potential of the software whilst using a point-and-click logic to run analyses.[21]

The process of executing the code that generates more Entities is referred to as “Running a Transform”. None of the Transforms on the CE version are ever executed on the host but on the Transform Servers. This means that running a Transform implies requesting the Transform Server to execute the piece of code or Transform, on your behalf. The paid version on the other hand has options to self-host the servers.[22]

Exploratory Link Analysis in Maltego is all about starting with the bits of information that we already have, and through running Transforms we explore the relationships that this information has with other as of yet unknown pieces of information. It also means identifying and establishing relationships between Entities on your graph that you may not be aware of yet.[22]
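Exploratory link analysis can be modelled as a breadth-first expansion of a graph, where each Transform maps one Entity to related Entities. The sketch below is a conceptual model only, not Maltego's API: the `Entity` class, the two toy transforms and `explore` are hypothetical, and real Transforms run on Transform Servers and query external data sources instead of manipulating strings.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    kind: str   # e.g. "EmailAddress", "Domain"
    value: str


# Hypothetical toy transforms: each maps one Entity to a list of related Entities.
def email_to_domain(entity):
    if entity.kind == "EmailAddress" and "@" in entity.value:
        return [Entity("Domain", entity.value.rsplit("@", 1)[1].lower())]
    return []


def domain_to_website(entity):
    if entity.kind == "Domain":
        return [Entity("Website", "www." + entity.value)]
    return []


def explore(seed, transforms, max_depth=2):
    """Breadth-first link analysis: run every transform on each newly found entity
    and record the (parent, child) edges that make up the graph."""
    edges = set()
    seen = {seed}
    queue = deque([(seed, 0)])
    while queue:
        entity, depth = queue.popleft()
        if depth == max_depth:
            continue  # stop expanding beyond the requested number of hops
        for transform in transforms:
            for child in transform(entity):
                edges.add((entity, child))
                if child not in seen:
                    seen.add(child)
                    queue.append((child, depth + 1))
    return seen, edges


nodes, edges = explore(Entity("EmailAddress", "info@paterva.com"), [email_to_domain, domain_to_website])
for parent, child in sorted(edges, key=lambda e: e[0].kind):
    print(f"{parent.kind}:{parent.value} -> {child.kind}:{child.value}")
```

Limiting the depth mirrors how an analyst usually runs Transforms a hop or two at a time rather than letting the graph grow unchecked.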

Running Transforms

To run a Transform, you first need to add some kind of information to the graph to start off with. This is done by identifying the type of information that you have in the Entity Palette and dragging that Entity onto the graph panel. Maltego will enter a default value to the Entity that you can change. For example, if you drag an email Entity onto the graph the default will be a sample email like info@paterva.com. By right-clicking on the Entity or simply viewing the RunView we have different kinds of Transform options, which when run, will return one or multiple Entities depending on what Transform is being run and will display it on the graph. Added Entities will have arrows indicating the relationships between the different Entities. When opening the Transform context menu on an Entity, all the Transforms will be grouped based on the source and type of information it will return. The groups will also vary based on what Transforms you have downloaded in the Transform Hub. Running a specific transform is done by clicking on the play arrow in the same cell.[22]

Transform Hub

All transforms are displayed in the Transform Hub, where you can update, refresh and add your own Transforms. The Transforms that will be pre-installed and available to you depend on the license you are using and on older versions will be displayed in light gray.

Examples of Transform partners

- Blockchain.info (Bitcoin): tracking bitcoin transactions through the bitcoin blockchain[23]

- Cisco Threat Grid: performs dynamic analysis of hundreds of millions of samples per year, indexing the indicators (Domain, IP, URL, Hash, Mutex, File Path, etc) from each analysis.[24]

- Have I Been Pwned?: used to identify security breaches and password leaks for users' security.[25]

- Shodan: a search engine that gathers data from internet-connected devices by IP. These connected devices are queried for various types of publicly available information.[26]

- PhoneSearch: typically used by Law Enforcement in the US to look up phone numbers in time-sensitive matters.[27]

- TinEye CE: used for reverse image searching[28]

SpiderFoot

SpiderFoot is an open-source automated intelligence tool written in Python 3. Its purpose is to automate the collection of information about a given target, and it queries more than a hundred open data sources. The collected data provides a wide range of information about the target, giving a clear picture of possible threats such as vulnerabilities, data leaks and other important information that you or your organisation may have exposed on the internet. This information can then feed into a penetration test and give earlier warning of threats before a system is attacked or data is stolen. The following entities can be targeted in a SpiderFoot scan:

- IP address

- Domain/sub-domain name

- Hostname

- Network subnet (CIDR)

- ASN

- E-mail address

- Phone number

- Username

- Person's name

- Bitcoin address[29]
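Since a scan starts from a single string, a tool has to decide which of these entity types the string represents. The following is a hypothetical Python classifier, not SpiderFoot's own detection logic: the regular expressions and their order (most specific first) are simplified assumptions, and domains and hostnames are deliberately lumped together because they cannot be told apart by syntax alone.

```python
import ipaddress
import re

# Simplified, assumed patterns for illustration only.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
ASN_RE = re.compile(r"^AS\d+$", re.IGNORECASE)
# Legacy Base58 addresses (start with 1 or 3, no 0/O/I/l) or Bech32 "bc1" addresses.
BITCOIN_RE = re.compile(r"^(?:[13][a-km-zA-HJ-NP-Z1-9]{25,34}|bc1[a-z0-9]{11,71})$")
PHONE_RE = re.compile(r"^\+\d[\d\s-]{6,}$")
DOMAIN_RE = re.compile(
    r"^(?=.{1,253}$)(?:[a-z0-9](?:[a-z0-9-]{0,61}[a-z0-9])?\.)+[a-z]{2,63}$", re.IGNORECASE
)
PERSON_RE = re.compile(r"^[A-Z][a-z]+(?: [A-Z][a-z]+)+$")
USERNAME_RE = re.compile(r"^[A-Za-z0-9_.-]{3,32}$")


def classify_target(value):
    """Guess which kind of scan target a string is; checks run from most to least specific."""
    value = value.strip()
    try:
        ipaddress.ip_address(value)
        return "IP address"
    except ValueError:
        pass
    if "/" in value:
        try:
            ipaddress.ip_network(value, strict=False)
            return "Network subnet (CIDR)"
        except ValueError:
            pass
    for label, pattern in (
        ("E-mail address", EMAIL_RE),
        ("ASN", ASN_RE),
        ("Bitcoin address", BITCOIN_RE),
        ("Phone number", PHONE_RE),
        ("Domain/sub-domain name or hostname", DOMAIN_RE),
        ("Person's name", PERSON_RE),
        ("Username", USERNAME_RE),
    ):
        if pattern.match(value):
            return label
    return "Unknown"


for sample in ["8.8.8.8", "192.168.0.0/24", "info@example.com", "AS15169", "John Smith", "jsmith2000"]:
    print(f"{sample!r}: {classify_target(sample)}")
```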

SpiderFoot is a modular tool, so various data sources can be disabled or enabled as needed. Based on the results obtained, you can create visualizations to help you analyze the data. The instructions explain quite clearly how to install the tool on most platforms. Although the instructions only mention Windows and Linux, it can be installed and used on MacOS as well. Unlike its analogue Maltego, the SpiderFoot interface is available in the browser and is completely free. [30]

Description of SpiderFoot Operating Modes

SpiderFoot offers three ways to choose what a target scan will run:

- By use case (three variants):

"All": every available module is enabled, so all obtainable information about the target is collected. "Footprint": gathers the information about the target that is available on the Internet. "Investigate": collects information about a target suspected of being malicious, such as an IP address taken from logs.

- By required data: you select the types of data you want to obtain (for example, "Account on External Site" and/or "Hosting Provider").

- By module: you select the individual modules to run (for example, "SHODAN" or "Spider").
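Choosing a scan by required data means working backwards from the requested data types to the modules that can produce them, and then to the modules that supply those modules' own inputs. The sketch below shows one way to resolve that chain. The module catalogue is a made-up example rather than SpiderFoot's real module list, and the resolution rule itself is an assumption about how such a selection could work.

```python
from collections import deque

# Illustrative catalogue: module name -> (data types it watches, data types it produces).
CATALOGUE = {
    "dns_resolve": ({"Domain Name"}, {"IP Address"}),
    "hosting_lookup": ({"IP Address"}, {"Hosting Provider"}),
    "email_harvest": ({"Domain Name"}, {"Email Address"}),
    "username_from_email": ({"Email Address"}, {"Username"}),
    "account_finder": ({"Username"}, {"Account on External Site"}),
}


def select_modules(wanted: set[str], catalogue: dict[str, tuple[set[str], set[str]]],
                   target_type: str) -> set[str]:
    """Pick every module needed to turn `target_type` into the `wanted` data types."""
    selected: set[str] = set()
    visited = {target_type}  # the target itself needs no producer
    pending = deque(wanted)
    while pending:
        dtype = pending.popleft()
        if dtype in visited:
            continue
        visited.add(dtype)
        for name, (watches, produces) in catalogue.items():
            if dtype in produces and name not in selected:
                selected.add(name)
                pending.extend(watches)  # the module's own inputs must be produced too


    return selected


print(sorted(select_modules({"Hosting Provider"}, CATALOGUE, "Domain Name")))
# ['dns_resolve', 'hosting_lookup']
```

Asking only for "Hosting Provider" pulls in the DNS module as well, because the hosting lookup needs IP addresses. A fuller version would also report requested data types that no module can produce, which this sketch silently ignores.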

SpiderFoot Installation

First, we install the dependencies:

pip install lxml netaddr M2Crypto cherrypy mako

Download the archive from the official site, unzip it and run:

./sf.py

The web interface is then available at http://127.0.0.1:5001.
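If `./sf.py` fails to start, a common cause is that the dependencies were installed for a different interpreter than the one running SpiderFoot. A small standard-library check like the one below, a sketch that assumes the import names match the pip package names listed above (they do for these five), reports which modules the current interpreter cannot find.

```python
import importlib.util
import sys

# Import names of the packages installed with pip above.
REQUIRED = ["lxml", "netaddr", "M2Crypto", "cherrypy", "mako"]


def missing_modules(names: list[str]) -> list[str]:
    """Return the top-level modules that the current interpreter cannot locate."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED)
    if missing:
        print(f"Missing for {sys.executable}: {', '.join(missing)}")
    else:
        print("All SpiderFoot dependencies found.")
```

Run it with the same interpreter you use for `sf.py`, so that the check reflects the environment SpiderFoot will actually see.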

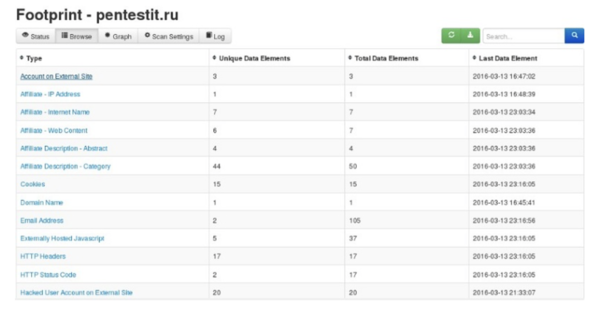

Scanning example

Mode: By Use Case (Footprint)

Scan name: "Footprint - pentestit.ru"

Target: pentestit.ru

Result:

The "Graph" tab displays the scan results as a graph, and its display can be configured (here, the force layout mode was selected with the "F" button). The results can also be saved to a file.
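The graph links each piece of data to the data it was derived from. As a rough sketch of the same idea outside the web interface, the functions below turn a list of (source, type, data) rows into a de-duplicated edge list and write it as CSV, a format most graph tools can import. The row layout and sample values are assumptions for illustration, not SpiderFoot's own export format.

```python
import csv
import io


def to_edges(rows: list[tuple[str, str, str]]) -> list[tuple[str, str, str]]:
    """Turn (source_data, data_type, data) rows into unique (source, target, label) edges."""
    edges = []
    seen = set()
    for source, dtype, data in rows:
        edge = (source, data, dtype)
        if edge not in seen:  # the same finding can be reported more than once
            seen.add(edge)
            edges.append(edge)
    return edges


def write_edges_csv(edges: list[tuple[str, str, str]], out) -> None:
    """Write edges with a header row to any text file-like object."""
    writer = csv.writer(out)
    writer.writerow(["source", "target", "label"])
    writer.writerows(edges)


# Hypothetical scan rows; a duplicate row is included on purpose.
sample = [
    ("example.com", "IP Address", "203.0.113.10"),
    ("example.com", "IP Address", "203.0.113.10"),
    ("203.0.113.10", "Physical Location", "Example City"),
]
buf = io.StringIO()
write_edges_csv(to_edges(sample), buf)
print(buf.getvalue())
```

Writing to a file instead only requires passing `open("edges.csv", "w", newline="")` in place of the `StringIO` buffer.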

Examples of SpiderFoot modules

- Abuse.ch: Check if a host/domain, IP or netblock is malicious according to abuse.ch.

- Account Finder: Look for possible associated accounts on nearly 200 websites like eBay, Slashdot, Reddit, etc.

- SHODAN: Obtain information from SHODAN about identified IP addresses.

- Keybase: Obtain additional information about the target username.

- Instagram: Gather information from Instagram profiles.

- Bitcoin Who's Who: Check Bitcoin addresses against the Bitcoin Who's Who database of suspect/malicious addresses.

- Amazon S3 Bucket Finder: Search for potential Amazon S3 buckets associated with the target and attempt to list their contents.

- AdBlock Check: Check if linked pages would be blocked by AdBlock Plus.

- BinaryEdge: Obtain information from BinaryEdge.io Internet scanning systems, including breaches, vulnerabilities, torrents and passive DNS.

- Blockchain: Queries blockchain.info to find the balance of identified bitcoin wallet addresses.

- BuiltWith: Query BuiltWith.com's Domain API for information about your target's web technology stack, e-mail addresses and more.[31]
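The idea behind a module like Account Finder can be sketched as filling a per-site profile URL template with the username and checking the HTTP response. The two-entry site list below is hypothetical (the real module uses a much larger list with its own per-site checks), and a 200 status is only a weak signal, since some sites return 200 even for profiles that do not exist.

```python
import urllib.error
import urllib.parse
import urllib.request
from typing import Optional

# Hypothetical profile URL templates; verify each site's format before relying on it.
SITES = {
    "reddit": "https://www.reddit.com/user/{}",
    "slashdot": "https://slashdot.org/~{}",
}


def candidate_urls(username: str, sites: dict[str, str] = SITES) -> dict[str, str]:
    """Fill every template with the URL-escaped username."""
    quoted = urllib.parse.quote(username, safe="")
    return {site: template.format(quoted) for site, template in sites.items()}


def probe(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the HTTP status code for url, or None if the request failed entirely."""
    req = urllib.request.Request(url, headers={"User-Agent": "Mozilla/5.0"})
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # e.g. 404 when the profile does not exist
    except OSError:
        return None  # DNS failure, refused connection, timeout, ...


def find_accounts(username: str) -> dict[str, str]:
    """Return {site: url} for sites that answered 200 (requires network access)."""
    return {site: url for site, url in candidate_urls(username).items() if probe(url) == 200}
```

Keeping URL construction separate from probing makes it easy to review the candidate list before sending any requests, and to add per-site rules later.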

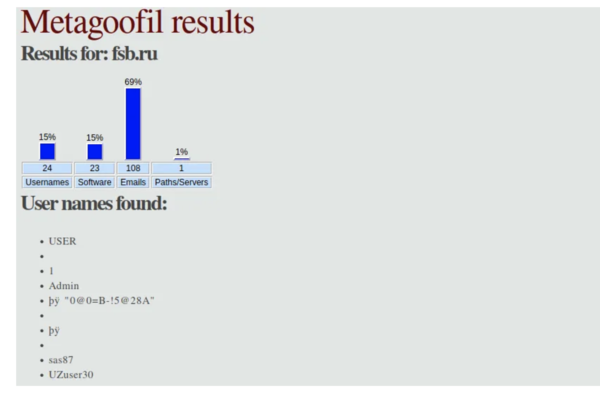

Metagoofil

The importance of metadata is often underestimated, yet it can reveal user names, the programs people use, the GPS coordinates where a photo was taken, users' operating systems, the time spent editing documents and much more. In practice, hardly anyone bothers to strip metadata from documents; a site could in principle clean the metadata of uploaded files automatically, but few actually do. To search for this metadata we can use Metagoofil, written in Python by Christian Martorella. Metagoofil is an information-gathering tool designed to extract metadata from public documents (pdf, doc, xls, ppt, docx, pptx, xlsx) belonging to a target website. This metadata can be useful for penetration testing and may include the file name, file size, the author's username, the file's location on disk and so on.

There are two versions of Metagoofil. The version in the Kali repositories only downloads documents from the website by default, while the second version also extracts the metadata and generates a report as an HTML page. The second version can download documents too, but it often fails, because Google tends to block it after a large number of requests. The latest version also extracts e-mail addresses from the content of PDF and Word documents.[32]
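To see what kind of metadata such a report contains, it helps to know where it is stored. An Office Open XML file (.docx, .xlsx, .pptx) is a ZIP archive, and its author, last editor and timestamps sit in `docProps/core.xml`. The sketch below reads those fields with only the standard library; it illustrates the idea rather than reproducing Metagoofil's code, and it does not handle legacy .doc files or PDFs, whose metadata is stored differently.

```python
import xml.etree.ElementTree as ET
import zipfile

# Namespaces used in docProps/core.xml (OPC core properties and Dublin Core).
NS = {
    "cp": "http://schemas.openxmlformats.org/package/2006/metadata/core-properties",
    "dc": "http://purl.org/dc/elements/1.1/",
    "dcterms": "http://purl.org/dc/terms/",
}

# Report field -> element holding it.
FIELDS = {
    "author": "dc:creator",
    "last_modified_by": "cp:lastModifiedBy",
    "created": "dcterms:created",
    "modified": "dcterms:modified",
    "title": "dc:title",
}


def ooxml_metadata(path_or_file) -> dict[str, str]:
    """Return the non-empty core properties of a .docx/.xlsx/.pptx path or binary file object."""
    with zipfile.ZipFile(path_or_file) as zf:
        root = ET.fromstring(zf.read("docProps/core.xml"))
    result = {}
    for key, tag in FIELDS.items():
        elem = root.find(tag, NS)
        if elem is not None and elem.text:
            result[key] = elem.text.strip()
    return result
```

Calling `ooxml_metadata("report.docx")` on a downloaded file returns only the fields that are actually filled in, so an empty result usually means the metadata was cleaned.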

Metagoofil installation

In Kali Linux, Metagoofil (the first version) can be installed with the command:

sudo apt install metagoofil

Before using it, display the built-in help:

metagoofil --help

This command lists the available parameters:

- -h, --help: show this help message and exit.

- -d DOMAIN: domain to search.

- -e DELAY: delay (in seconds) between searches. If it is too small, Google may block your IP address; if it is too large, the search may take a long time. Default: 30.0

- -f: save the HTML links to the html_links_.txt file.

- -i URL_TIMEOUT: number of seconds to wait before timing out on unavailable or outdated pages. Default: 15

- -l SEARCH_MAX: maximum number of search results. Default: 100

- -n DOWNLOAD_FILE_LIMIT: maximum number of files to download for each file type. Default: 100

- -o SAVE_DIRECTORY: directory for saving downloaded files. Default: the current working directory, "."

- -r NUMBER_OF_THREADS: number of search threads. Default: 8

- -t FILE_TYPES: file types to download (pdf, doc, xls, ppt, odp, ods, docx, xlsx, pptx). To search for all three-letter file extensions, enter "ALL".

- -u [USER_AGENT]: User-Agent used when retrieving files from the -d domain. Without -u: "Mozilla/5.0 (compatible; Googlebot/2.1 http://www.google.com/bot.html)"; -u alone: a random User-Agent; -u "My custom user agent 2.0": your custom User-Agent.

- -w: download the files instead of just viewing the search results.[33]
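When Metagoofil is driven from a script, it is safest to assemble these options as an argument list and pass it to `subprocess` without a shell, so that domain names and file types cannot be misinterpreted by the shell. The helper below is a hypothetical wrapper that uses only the -d, -t, -l, -n, -o, -e and -w options listed above; it assumes the `metagoofil` command from the Kali package is on the PATH.

```python
import shutil
import subprocess


def metagoofil_args(domain: str, file_types: list[str], search_max: int = 100,
                    download_limit: int = 100, out_dir: str = ".",
                    delay: float = 30.0, download: bool = False) -> list[str]:
    """Build a metagoofil argument list from the documented options."""
    args = ["metagoofil",
            "-d", domain,
            "-t", ",".join(file_types),  # comma-separated list, e.g. "pdf,docx"
            "-l", str(search_max),
            "-n", str(download_limit),
            "-o", out_dir,
            "-e", str(delay)]
    if download:
        args.append("-w")  # actually download the files, not just list results
    return args


def run_metagoofil(args: list[str]) -> int:
    """Run the command if metagoofil is installed and return its exit code."""
    if shutil.which(args[0]) is None:
        raise FileNotFoundError("metagoofil not found on PATH")
    return subprocess.run(args, check=False).returncode
```

For example, `metagoofil_args("example.com", ["pdf", "docx"], download=True)` corresponds to the command `metagoofil -d example.com -t pdf,docx -l 100 -n 100 -o . -e 30.0 -w`, where example.com is a placeholder domain.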

Example of obtained metadata:

References

- [1] "The 9-Eyes project"

- [2] "OSINT". Retrieved 29.04.2021

- [3] "History". Retrieved 29.04.2021

- [4] "Usage of OSINT". Retrieved 01.05.2021

- [5] "Risks using OSINT". Retrieved 02.05.2021

- [6] "Laws regarding OSINT". Retrieved 01.05.2021

- [7] "Framework". Retrieved 03.05.2021

- [8] "OSINT tools"

- [9] "The Netstalking Handbook". Retrieved 12.03.2021

- [10] "Oldest repository of Nesca". Retrieved 05.05.2021

- [11] "The Nesca audit"

- [12] "Basic search fundamentals of Shodan"

- [13] "Maltego interface". Retrieved 04.04.2021

- [14] "Paterva". Retrieved 03.04.2021

- [15] "Maltego introduction". Retrieved 04.04.2021

- [16] "Transform Hub". Retrieved 04.04.2021

- [17] "Maltego usages". Retrieved 04.04.2021

- [18] "Setup". Retrieved 03.04.2021

- [19] "Maltego licences". Retrieved 04.04.2021

- [20] "Entities". Retrieved 04.04.2021

- [21] "Transform definition". Retrieved 04.04.2021

- [22] "Maltego Transform run". Retrieved 04.04.2021

- [23] "Bitcoin". Retrieved 03.04.2021

- [24] "Cisco Transform". Retrieved 03.04.2021

- [25] "Have I Been Pwned? Transform". Retrieved 03.04.2021

- [26] "Shodan Transform". Retrieved 03.04.2021

- [27] "PhoneSearch Transform". Retrieved 03.04.2021

- [28] "TinEye". Retrieved 03.04.2021

- [29] "KaliTools". Retrieved 03.04.2021

- [30] "SearchTools". Retrieved 03.04.2021

- [31] "SpiderFoot". Retrieved 03.04.2021

- [32] "MetadataAnalysis". Retrieved 03.04.2021

- [33] "Metagoofil". Retrieved 03.04.2021